Genomics data sets are highly sophisticated and multi-layered. Now, possibly more than ever before, we need those data sets to be publicly available, reproducible and transparent. It’s not an easy task, but researchers around the globe are finding a way to make it happen…

Eva Chan, a bioinformatician at the Garvan Institute of Medical Research, is an ace at making sense of the massive genomic data sets her research group works with.

An important part of her work, she says, is reproducibility. In order for her analysis to add value, every step must be carefully documented. “Choosing parameters and remembering them” is crucial in the world of bioinformatics and Eva manages to do this while handling big data, complex file types and multiple projects all at the same time.

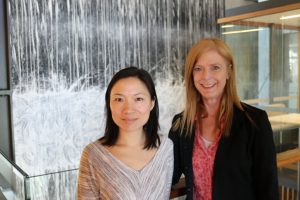

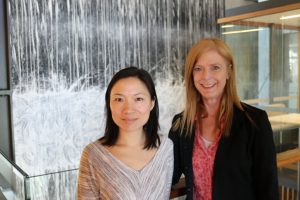

Eva Chan (left) and Vanessa Hayes (right) conduct genomic research at Garvan Institute of Medical Research in Sydney, Australia.

A lot of data

Before analysis even begins, genomics data is highly sophisticated and multi-layered. To make things even more complex, several different people handle the data that Eva works with, and she works on more than one project at once. Intricate data and intricate workflows call for a high level of organization. Eva conducts her day-to-day work within LabArchives to keep track of it all.

Sometimes, Eva will spend an entire day analyzing trends and processing raw data. When she’s immersed in her work, she might not talk to anyone else for the entire day. When she does need to collaborate with team members, she can do it via LabArchives in a matter of seconds.

Eva shares links to her work with collaborators and can share big files with them via LabArchives when needed. The files she works with range from standard to highly specific and nearly all of them can be stored and shared with LabArchives.

Complex file types and DOIs

About a year ago, Eva needed to publish a data set that she’d generated with a piece of optical mapping technology. This technology allows her to identify large-scale genomic rearrangements that are often difficult to “see” from genome sequencing data alone. Sound complicated? It was.

Because the data was referenced in a paper her group was trying to publish, Eva needed to make the data publicly available. Unfortunately, NCBI and other commonly used data repositories didn’t support the file type. LabArchives, however, did.

Eva created a public DOI within LabArchives to share the data set, which anyone can now access via the paper. It was a pretty simple solution for a pretty complicated data set.

Reproducibility

Eva often has to repeat certain types of analysis many times over. She creates templates in LabArchives to speed up the process. It’s the long-term reproducibility of her data, however, that matters most to her.

Eva uses LabArchives to jot down quick ideas and notes for herself so that, down the track, she can remember why she chose one type of analysis over another, for instance. She records her steps and thought processes alongside her analysis so that every bit of work she does has context. Later, when she needs to refer back to that context, she can find it quickly in LabArchives.

The search function allows Eva to quickly locate data months (or even years) after she’s done working with it. All of her work is documented and indexed with LabArchives’ revision history feature, so she never has to worry about losing data. If one of her group’s papers were to be reviewed in the future, all data tied to it would be securely stored with attribution in LabArchives. Better still? Many of the group’s papers link directly back to the cited work within LabArchives for complete transparency.

Managing complex data and workflows isn’t an easy task, but Eva’s strategies make a reproducible, collaborative and transparent future for all research a very achievable goal.